TL;DR

An AI language-learning app built with Next.js that provides a conversational partner for personalized practice.

A small, fine-tuned multilingual LLM is hosted using optimization techniques such as quantization and adapter switching.

Features to Add Later

- Improving response quality with additional prompting methods

- RAG to retrieve relevant information from the entire chat history

- Online chat with other users, including group chats
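The RAG item is not built yet. As a rough sketch of its retrieval step, the Python below ranks past messages by cosine similarity to the current message and keeps the top k. It assumes a toy bag-of-words vector in place of a real embedding model; `embed`, `retrieve`, and the sample history are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(history: list[str], query: str, k: int = 2) -> list[str]:
    # Rank every past message by similarity to the query and keep the top k.
    q = embed(query)
    ranked = sorted(history, key=lambda msg: cosine(embed(msg), q), reverse=True)
    return ranked[:k]


history = [
    "Me encanta la comida mexicana, sobre todo los tacos.",
    "Ayer fui al cine con mi hermana.",
    "Quiero viajar a Madrid el próximo verano.",
]
print(retrieve(history, "¿Qué comida te gusta?", k=1))
# ['Me encanta la comida mexicana, sobre todo los tacos.']
```

The retrieved messages would then be added to the prompt as context, so the model can refer back to things the learner said long ago without the whole history filling the context window.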

Journal Entry

[Application]

This project came to mind while I was studying Spanish on my own.

I was learning from a textbook, along with YouTube videos and podcasts for listening practice.

However, because I was studying alone, I had no opportunity to practice the language in a natural setting.

So, why not build an AI conversational partner that could help me practice Spanish more naturally?

I wanted to try a full-stack framework, so I chose Next.js with TypeScript.

I used GraphQL for the API, since I thought its schemas would pair well with TypeScript's type safety.

I chose MongoDB this time because new data would keep being added, and I wanted to stay flexible about what the database would store.

With the big picture decided, I created a to-do list with detailed implementation steps, features to include, and so on.

I also wrote a claude.md file to make the AI-assisted workflow easier.

First came the basic components of the chat app.

These included a chat interface, message bubbles, date dividers, and side navigation.
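Date dividers only need to appear where the calendar day changes between consecutive messages. A minimal sketch of that grouping logic, written in Python for illustration even though the app itself is TypeScript; the `Message` shape is hypothetical, and timestamps are assumed to already be in the user's local time zone.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Message:
    # Hypothetical message shape; the real schema is not shown in this write-up.
    text: str
    sent_at: datetime


def with_date_dividers(messages: list[Message]) -> list[object]:
    """Return the messages in time order, with a date label inserted
    before the first message of each new calendar day."""
    items: list[object] = []
    last_day = None
    for msg in sorted(messages, key=lambda m: m.sent_at):
        day = msg.sent_at.date()
        if day != last_day:
            items.append(day.isoformat())  # divider label, e.g. "2026-03-01"
            last_day = day
        items.append(msg)
    return items


msgs = [
    Message("Hola", datetime(2026, 3, 1, 9, 0)),
    Message("¿Qué tal?", datetime(2026, 3, 1, 9, 1)),
    Message("Buenos días", datetime(2026, 3, 2, 8, 30)),
]
for item in with_date_dividers(msgs):
    print(item if isinstance(item, str) else f"  {item.text}")
```

The UI then renders string items as dividers and `Message` items as bubbles.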

Then I added text-to-speech (TTS) and speech-to-text (STT) for listening and speaking practice.

For now, I use the Web Speech API, so the TTS quality is quite poor.

I will probably switch to a better API later on.

Once the chat app was in place, the next step was deciding what information to collect from users to provide a personalized learning experience.

This included a brief introduction, language level, correction style, learning goals, and interests.

(Correction style was later removed because the fine-tuned model did not follow the correction instructions well.)
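To illustrate how the collected profile might reach the model, here is a sketch that turns it into a short system prompt. The field names and prompt wording are assumptions, not the app's actual prompt; correction style is left out, in line with the note above.

```python
from dataclasses import dataclass, field


@dataclass
class LearnerProfile:
    # Hypothetical fields mirroring what the onboarding form collects.
    introduction: str
    target_language: str
    level: str  # CEFR level, e.g. "A2"
    goals: list[str] = field(default_factory=list)
    interests: list[str] = field(default_factory=list)


def build_system_prompt(p: LearnerProfile) -> str:
    # Short, plain instructions; the exact wording here is illustrative.
    lines = [
        f"You are a friendly conversation partner. Reply only in {p.target_language}.",
        f"The learner's level is {p.level}; match your vocabulary and sentence length to it.",
        f"About the learner: {p.introduction}",
    ]
    if p.goals:
        lines.append("Learning goals: " + ", ".join(p.goals) + ".")
    if p.interests:
        lines.append("Interests to bring up naturally: " + ", ".join(p.interests) + ".")
    return "\n".join(lines)


profile = LearnerProfile(
    introduction="Software engineer studying on my own.",
    target_language="Spanish",
    level="A2",
    goals=["ordering food while traveling"],
    interests=["travel", "food"],
)
print(build_system_prompt(profile))
```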

[AI Engineering]

Then, it was time to choose the model.

My original model was Llama 3.2 1B Instruct.

It was multilingual and small enough to be deployed sustainably.

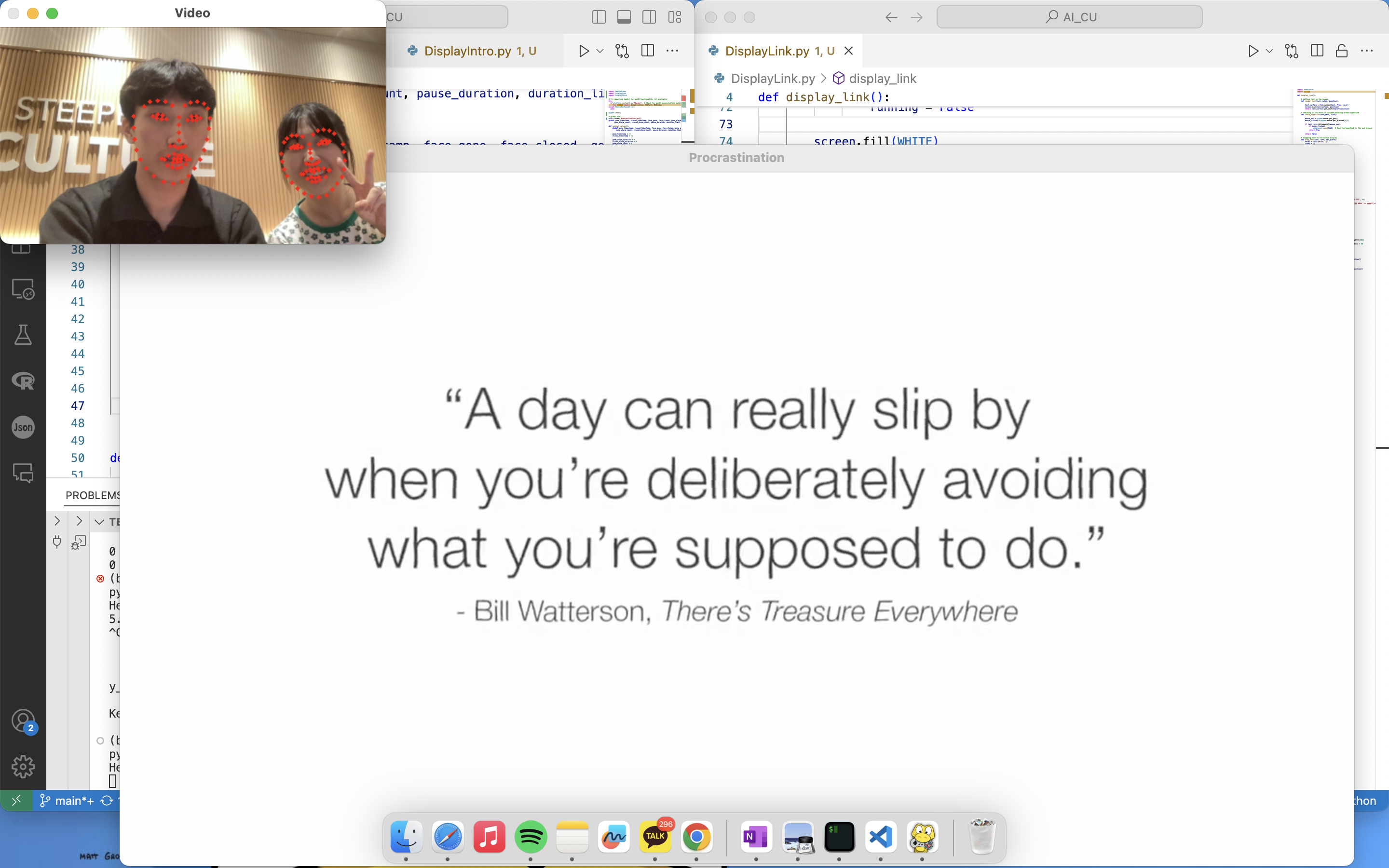

After choosing the model, I tested it with a series of conversations a typical user might have.

The responses were too verbose and unnatural even after prompt engineering, so I decided to fine-tune the model.

At first, I wanted to fine-tune the model on specific characters.

For example, a user could chat with Don Quixote to learn Spanish, or with a K-drama character to learn Korean.

But when I began searching for training data, I realized it would be impossible to gather a large amount of quality data for this.

So instead, I decided to fine-tune the model per language with LoRA.

I started with three languages: Korean, English, and Spanish.

I chose English and Korean because I could judge the quality of the responses myself.

I chose Spanish because I wanted to use the app myself to learn the language.
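For readers new to LoRA: the base weight matrix `W` stays frozen, and training only updates two small matrices, `B` (d x r) and `A` (r x k). The adapted layer computes `W x + (alpha / r) * B A x`, so "one adapter per language" means one small `(A, B)` pair per language on top of a shared base model. The pure-Python sketch below shows only that forward pass with toy numbers; PEFT does this internally, and the "es" adapter values are made up.

```python
def matvec(m: list[list[float]], x: list[float]) -> list[float]:
    # Plain matrix-vector product.
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def lora_forward(W, A, B, x, alpha: float, r: int) -> list[float]:
    """h = W x + (alpha / r) * B (A x).
    W: d x k frozen base weight; A: r x k and B: d x r are the trainable LoRA factors."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


# Toy sizes: d = k = 3, rank r = 1.
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # frozen
A = [[1.0, 1.0, 1.0]]                                    # r x k
B_zero = [[0.0], [0.0], [0.0]]                           # d x r; LoRA initializes B to zero
B_es = [[0.5], [0.0], [-0.5]]                            # hypothetical trained "es" adapter
x = [1.0, 2.0, 3.0]

print(lora_forward(W, A, B_zero, x, alpha=2.0, r=1))  # [1.0, 2.0, 3.0], same as the base model
print(lora_forward(W, A, B_es, x, alpha=2.0, r=1))    # [7.0, 2.0, -3.0]
```

Because `B` starts at zero, a freshly initialized adapter leaves the base model's behavior unchanged, and training only has to learn the per-language difference.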

I still struggled to find multi-turn conversational data suitable for fine-tuning.

So, after digging through Kaggle and Hugging Face datasets with no satisfactory results, I decided to use synthetic data.

I spent some time thinking about variables that would make the data more diverse.

The first was a list of topics that users would generally be interested in, such as travel or food.

Other variables included conversation length (number of messages), conversation position, and CEFR level.

I also experimented with other variables, like politeness and correction style, but decided against them in the end.
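The variables above can be combined into one "spec" per conversation, which is then turned into an instruction for whichever LLM generates the data. A minimal sketch under stated assumptions: the topic list, the 4 to 12 message range, and the position values are hypothetical, and "conversation position" is taken here to mean where in a longer chat the generated excerpt sits.

```python
import random
from dataclasses import dataclass

# Hypothetical value lists; the project's actual lists are not given.
TOPICS = ["travel", "food", "music", "work", "hobbies", "movies"]
CEFR_LEVELS = ["A1", "A2", "B1", "B2", "C1", "C2"]
POSITIONS = ["opening", "middle", "closing"]  # assumed meaning of "conversation position"


@dataclass(frozen=True)
class ConversationSpec:
    language: str
    topic: str
    level: str
    position: str
    num_messages: int


def sample_specs(language: str, n: int, seed: int = 0) -> list[ConversationSpec]:
    # Seeded RNG so the same dataset can be regenerated exactly.
    rng = random.Random(seed)
    return [
        ConversationSpec(
            language=language,
            topic=rng.choice(TOPICS),
            level=rng.choice(CEFR_LEVELS),
            position=rng.choice(POSITIONS),
            num_messages=rng.randint(4, 12),  # conversation length; range is an assumption
        )
        for _ in range(n)
    ]


def to_generation_prompt(spec: ConversationSpec) -> str:
    # Instruction for the generator model (wording is illustrative).
    return (
        f"Write a {spec.num_messages}-message {spec.language} conversation between a learner "
        f"at CEFR level {spec.level} and a friendly partner about {spec.topic}. "
        f"It should read like the {spec.position} of a longer chat."
    )


specs = sample_specs("Spanish", n=2000)
print(to_generation_prompt(specs[0]))
```

Sampling each variable independently spreads the 2,000 conversations across many combinations instead of clustering around whatever the generator model would produce by default.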

After generating 2,000 conversations per language, I used the PEFT library in a Kaggle notebook to fine-tune the model.

(free GPUs!)

Training loss, validation loss, perplexity, and LoRA weights all showed that the model was learning well.
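Perplexity is the exponential of the mean per-token cross-entropy loss (natural log), so it rises and falls with the validation loss rather than adding independent evidence. A quick check with made-up loss values:

```python
import math


def perplexity(mean_cross_entropy: float) -> float:
    # Perplexity = exp(average negative log-likelihood per token, in nats).
    return math.exp(mean_cross_entropy)


# Hypothetical validation losses before and after fine-tuning.
for loss in (2.0, 1.2):
    print(f"loss={loss:.2f} -> perplexity={perplexity(loss):.2f}")
```

This prints perplexities of 7.39 and 3.32: roughly, the model goes from being as unsure as a uniform choice among about 7 tokens to about 3.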

I chose Hugging Face Spaces to host the fine-tuned model, as it offered 16 GB of RAM for free.

Once I had set up the APIs for token streaming and adapter switching, along with a simple Gradio UI, I began testing the model.
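Adapter switching lets one base model serve all three languages: each LoRA adapter is loaded once under a name, and the active one changes per request. PEFT's `PeftModel` provides `load_adapter(model_id, adapter_name)` and `set_adapter(adapter_name)` for this. The sketch below only wraps that idea; the `AdapterRouter` class, language codes, and adapter paths are hypothetical, and a stand-in model is used so it runs without downloading weights.

```python
from typing import Optional


class AdapterRouter:
    """Keep the active LoRA adapter in sync with each request's language.

    `model` is assumed to behave like peft.PeftModel: already holding one adapter
    (e.g. created with PeftModel.from_pretrained(base, path, adapter_name="en"))
    and able to load_adapter() more and set_adapter() between them.
    """

    def __init__(self, model, initial: str, extra_adapters: dict[str, str]):
        self.model = model
        self.active: Optional[str] = initial
        for lang, path in extra_adapters.items():
            # Load each remaining adapter once at startup, named by language code.
            self.model.load_adapter(path, adapter_name=lang)

    def use(self, lang: str) -> None:
        # Only call set_adapter when the language actually changes.
        if lang != self.active:
            self.model.set_adapter(lang)
            self.active = lang


class FakeModel:
    # Stand-in that records calls instead of touching real weights.
    def __init__(self):
        self.calls = []

    def load_adapter(self, path, adapter_name):
        self.calls.append(("load", adapter_name))

    def set_adapter(self, adapter_name):
        self.calls.append(("set", adapter_name))


router = AdapterRouter(FakeModel(), "en", {"es": "adapters/es", "ko": "adapters/ko"})
for lang in ["es", "es", "ko", "ko"]:
    router.use(lang)
print(router.model.calls)
```

Because `set_adapter` changes shared model state, concurrent requests in different languages would need a lock or a per-language queue around generation.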

Then I ran into two issues.

1. Despite a minimal system prompt and a 1B-parameter model, inference was extremely slow, taking 5-10 minutes to generate a simple response.

2. The quality was just bad. Responses were subpar in English, and in Spanish and Korean the model struggled to understand the language or form coherent sentences.

I initially thought something was wrong with the fine-tuning, but the problem persisted with the base model as well.

(In hindsight, I should have tested the base model's performance before fine-tuning...)

To solve the first issue, I looked for ways to optimize inference on CPU.

I quantized the model to Q4_K_M in the GGUF format and ran it with the llama-cpp library, but inference was still too slow.
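To show what 4-bit quantization trades away, here is a simplified block-wise scheme: each block of 32 weights keeps one float scale plus small integers in [-8, 7]. This is not the actual Q4_K_M layout (llama.cpp's k-quants use 256-weight super-blocks with quantized per-block scales and minimums, and the _M variant keeps some tensors at higher precision); it is closer in spirit to the simpler Q4_0 type and only illustrates the idea.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    # Symmetric 4-bit: map the largest magnitude to 7 and round the rest.
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    return [scale * v for v in q]


def quantize(weights: list[float], block_size: int = 32) -> list[tuple[float, list[int]]]:
    # Quantize each block independently so one outlier only affects its own block.
    return [quantize_block(weights[i:i + block_size]) for i in range(0, len(weights), block_size)]


weights = [((i * 37) % 101 - 50) / 100 for i in range(64)]  # toy weights in [-0.5, 0.5]
blocks = quantize(weights)
restored = [w for scale, q in blocks for w in dequantize_block(scale, q)]
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, max abs error {max_err:.4f}")
```

Storage-wise, 32 weights at 4 bits is 16 bytes, plus a 2-byte fp16 scale, or 4.5 bits per weight versus 16 for fp16. The rounding error per weight is at most half a scale step.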

After many attempts, I moved to a hosting service that provided a GPU and more RAM.

Because I was no longer constrained by the resources of Hugging Face Spaces, I searched for a better model.

I ended up with Qwen2.5-7B-Instruct.

The base model initially gave Chinese responses to Korean prompts, so I considered adding a filter for each language.

Fortunately, this issue was fixed with finetuning.
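The per-language filter turned out to be unnecessary, but one simple way to catch this failure is to count characters by Unicode block and flag a reply meant to be Korean whose letters are mostly CJK ideographs. A rough sketch; the function names and the 0.5 threshold are assumptions.

```python
def script_counts(text: str) -> dict[str, int]:
    counts = {"hangul": 0, "han": 0, "other": 0}
    for ch in text:
        cp = ord(ch)
        if 0xAC00 <= cp <= 0xD7A3 or 0x1100 <= cp <= 0x11FF or 0x3130 <= cp <= 0x318F:
            counts["hangul"] += 1  # Hangul syllables and jamo
        elif 0x4E00 <= cp <= 0x9FFF:
            counts["han"] += 1  # CJK Unified Ideographs (Chinese characters)
        elif ch.isalpha():
            counts["other"] += 1
    return counts


def looks_korean(text: str, min_ratio: float = 0.5) -> bool:
    # Share of letters that are Hangul; spaces, digits, and punctuation are ignored.
    c = script_counts(text)
    letters = sum(c.values())
    return letters > 0 and c["hangul"] / letters >= min_ratio


print(looks_korean("안녕하세요! 오늘 뭐 했어요?"))  # True
print(looks_korean("你好！今天你做了什么？"))        # False
```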

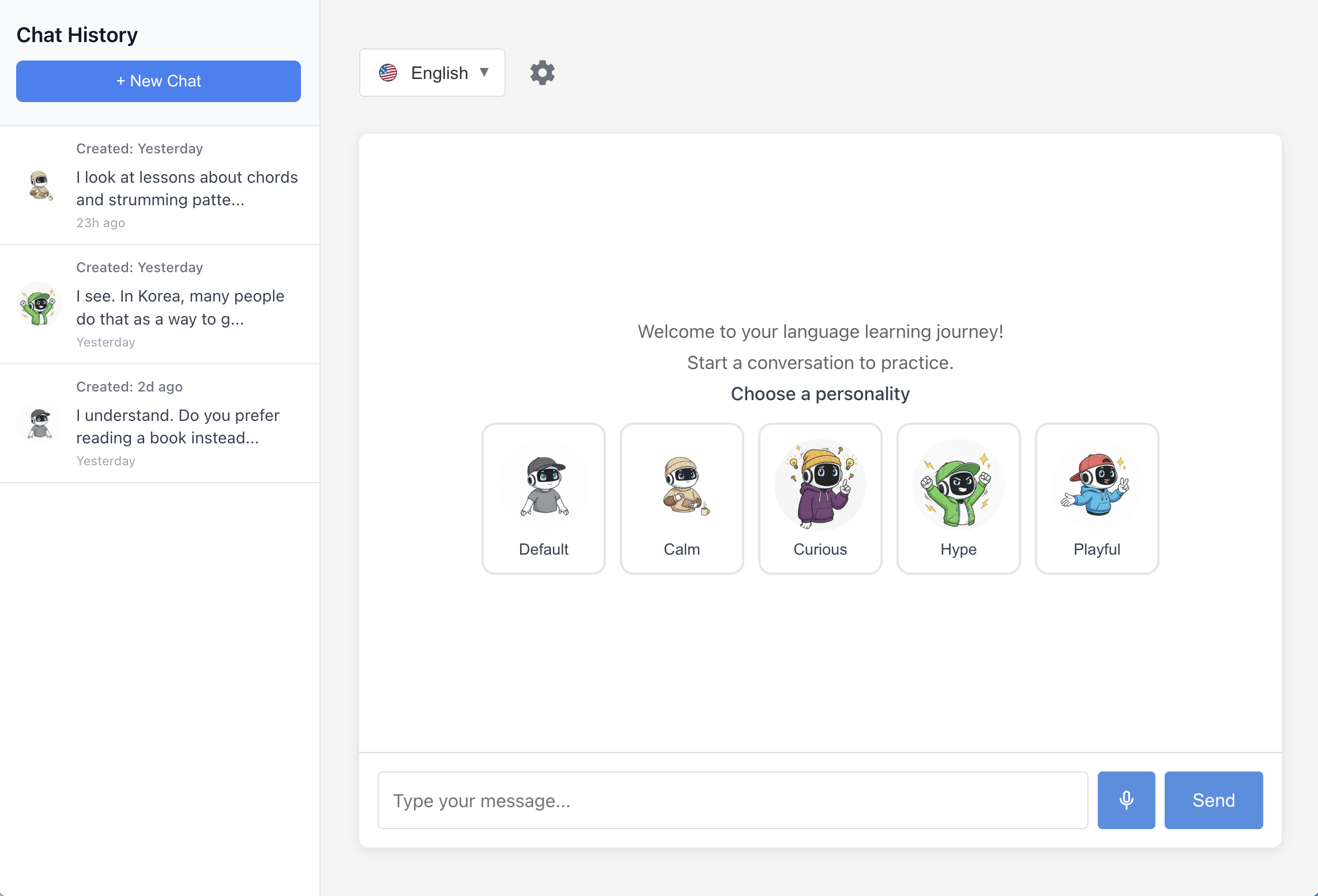

Finally, I designed a few different personalities for the chatbot to give learners a more varied experience.

These include a curious personality, a playful one, and more.

Currently, they are implemented through prompt engineering, but this may change to fine-tuning later on.
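Since the personalities are prompt-based, each one can be a short instruction appended to the learner's system prompt. A sketch with hypothetical persona wording (only "curious" and "playful" come from the list above), producing messages in the role/content format that Hugging Face chat templates accept:

```python
# Hypothetical persona instructions; only "curious" and "playful" are named in the write-up.
PERSONAS = {
    "curious": "Ask follow-up questions about what the learner says.",
    "playful": "Keep a light, joking tone and use simple wordplay when it fits the level.",
}


def with_persona(system_prompt: str, persona: str) -> list[dict[str, str]]:
    # Unknown persona keys fall back to the plain system prompt.
    instruction = PERSONAS.get(persona, "")
    content = f"{system_prompt}\n{instruction}" if instruction else system_prompt
    return [{"role": "system", "content": content}]


messages = with_persona("You are a friendly Spanish conversation partner.", "curious")
messages.append({"role": "user", "content": "Hoy cociné paella."})
print(messages[0]["content"])
```

Keeping personas as data rather than code makes it easy to add new ones, and the same keys could later select fine-tuned adapters if prompting stops being enough.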

[Return to Application]

After that, all that remained was adding the final touches, checking for errors, and deploying.

Authentication was handled by Auth.js, with an option for Google credentials.

Because my GraphQL subscriptions required a Pub/Sub system, I used Redis.

This setup would also be useful later for online group chats.
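Subscriptions need Pub/Sub because the mutation that stores a new message and the open subscription that streams it to the client are handled separately, so they communicate through named channels; Redis plays that role across server instances. The asyncio sketch below is an in-memory stand-in that shows only the pattern. The `PubSub` class and channel names are hypothetical and are not the Redis or GraphQL API.

```python
import asyncio
from collections import defaultdict


class PubSub:
    """In-memory stand-in for Redis pub/sub: one queue per subscriber per channel."""

    def __init__(self):
        self.channels: dict[str, list[asyncio.Queue]] = defaultdict(list)

    def subscribe(self, channel: str) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self.channels[channel].append(queue)
        return queue

    async def publish(self, channel: str, payload: dict) -> None:
        # Fan the payload out to every subscriber of the channel.
        for queue in self.channels[channel]:
            await queue.put(payload)


async def main() -> list[dict]:
    pubsub = PubSub()
    # A subscription resolver would listen on the chat's channel...
    inbox = pubsub.subscribe("chat:42")
    # ...while the mutation that saves a bot reply publishes to it.
    await pubsub.publish("chat:42", {"role": "assistant", "text": "¡Hola!"})
    await pubsub.publish("chat:7", {"role": "assistant", "text": "not for chat 42"})
    return [await inbox.get()]


print(asyncio.run(main()))
```

With a group chat, several users would simply subscribe to the same channel, which is why the same setup carries over.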

Currently, the model is hosted without a container and cold-starts on requests, so initial responses are slow.

Of course, both quality and speed would improve with SOTA model APIs, but I wanted full control over every part of this project.

As of March 2026, some new features have been added.

An "Improve my sentence" button has been added to help learners check their mistakes and improve on them.

A "Translate" button has also been added to bot messages to help users understand unfamiliar phrases.
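Both buttons can reuse the hosted model with a one-off instruction instead of the conversation prompt. A sketch of the two requests as chat messages; the function names and prompt wording are assumptions, not the app's actual prompts.

```python
def improve_sentence_messages(sentence: str, language: str, level: str) -> list[dict[str, str]]:
    # One-off request: correct the learner's sentence and explain briefly.
    return [
        {
            "role": "system",
            "content": (
                f"You are a {language} tutor for a CEFR {level} learner. Return a corrected, "
                "more natural version of the sentence, then list each change with a short reason."
            ),
        },
        {"role": "user", "content": sentence},
    ]


def translate_messages(bot_message: str, target_language: str) -> list[dict[str, str]]:
    # Translate a bot message into the learner's own language.
    return [
        {
            "role": "system",
            "content": f"Translate the user's text into {target_language}. Output only the translation.",
        },
        {"role": "user", "content": bot_message},
    ]


print(improve_sentence_messages("Yo soy tener hambre.", "Spanish", "A2")[1]["content"])
print(translate_messages("¿Qué hiciste el fin de semana?", "English")[0]["content"])
```

Sending these as separate requests keeps corrections and translations out of the conversation history, so the chat itself stays entirely in the target language.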